Looking ahead, if these information-removal techniques are developed further, AI companies could potentially one day remove, say, copyrighted content, private information, or harmful memorized text from a neural network without destroying the model’s ability to perform transformative tasks. However, since neural networks store information in distributed ways that are still not completely understood, for the time being, the researchers say their method “cannot guarantee complete elimination of sensitive information.” These are early steps in a new research direction for AI.

Traveling the neural landscape

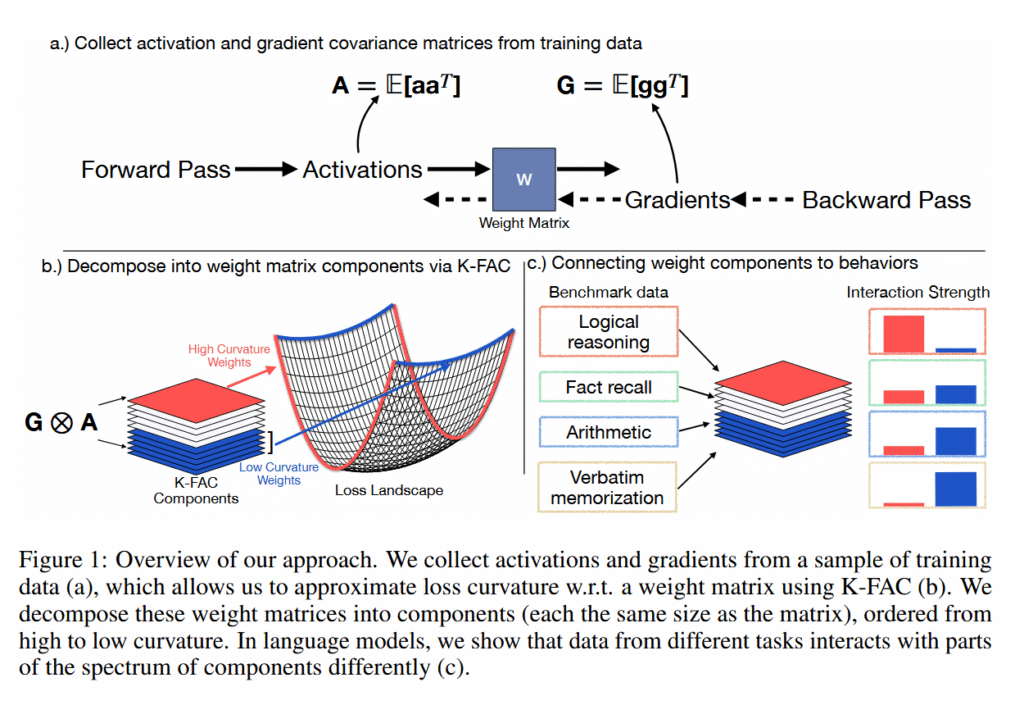

To understand how researchers from Goodfire distinguished memorization from reasoning in these neural networks, it helps to know about a concept in AI called the “loss landscape.” The loss landscape is a way of visualizing how wrong or right an AI model’s predictions are as you adjust its internal settings (which are called “weights”).

Imagine you’re tuning a complex machine with millions of dials. The “loss” measures the number of mistakes the machine makes. High loss means many errors, low loss means few errors. The “landscape” is what you’d see if you could map out the error rate for every possible combination of dial settings.

During training, AI models essentially “roll downhill” in this landscape (a process called gradient descent), adjusting their weights to find the valleys where they make the fewest mistakes. The weights the model settles on are what produce its outputs, like answers to questions.
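For readers who want to see the “rolling downhill” idea in concrete terms, here is a minimal sketch in Python. It assumes a made-up loss with one dial and a single valley at 3.0; the function names, the learning rate, and the starting point are illustrative choices, not anything taken from the research.

```python
# Toy illustration of gradient descent on a one-dial "machine".
# The loss function below is hypothetical: one valley, located at w = 3.0.

def loss(w):
    # How "wrong" the machine is at dial setting w.
    return (w - 3.0) ** 2

def grad(w):
    # Slope of the loss at w (derivative of (w - 3)^2).
    return 2.0 * (w - 3.0)

def gradient_descent(w0, lr=0.1, steps=100):
    # Repeatedly step against the slope, i.e. "roll downhill".
    w = w0
    for _ in range(steps):
        w -= lr * grad(w)
    return w

w_start = -5.0
w_final = gradient_descent(w_start)
print(f"start loss: {loss(w_start):.4f}, final loss: {loss(w_final):.12f}")
```

A real language model does the same thing across billions of dials at once, with a loss computed from its prediction errors on training text rather than from a simple formula.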

The researchers analyzed the “curvature” of the loss landscapes of particular AI language models, measuring how sensitive the model’s performance is to small changes in different neural network weights. Sharp peaks and valleys represent high curvature (where tiny changes cause big effects), while flat plains represent low curvature (where changes have minimal impact).
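Curvature can be made concrete with a small numerical sketch. The example below assumes two invented one-dial losses, a sharp valley and a flat plain, and estimates curvature with a standard finite-difference second derivative. This is a simplified stand-in for illustration, not the measurement the researchers used.

```python
# Toy comparison of high and low curvature around the bottom of a valley.
# Both loss functions are hypothetical examples.

def sharp_valley(w):
    # Tiny changes in w cause big changes in loss.
    return 100.0 * w ** 2

def flat_plain(w):
    # Changes in w barely move the loss.
    return 0.01 * w ** 2

def curvature(loss_fn, w, h=1e-3):
    # Central finite-difference estimate of the second derivative at w.
    return (loss_fn(w + h) - 2.0 * loss_fn(w) + loss_fn(w - h)) / (h * h)

sharp = curvature(sharp_valley, 0.0)
flat = curvature(flat_plain, 0.0)

# Effect of the same small nudge (0.1) on each loss.
nudge = 0.1
print(f"sharp: curvature={sharp:.3f}, loss after nudge={sharp_valley(nudge):.4f}")
print(f"flat:  curvature={flat:.3f}, loss after nudge={flat_plain(nudge):.6f}")
```

The same nudge to the dial costs far more in the sharp valley than on the flat plain, which is what “sensitivity to small changes” means here.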

Using a technique called K-FAC (Kronecker-Factored Approximate Curvature), they found that individual memorized facts create sharp spikes in this landscape, but because each memorized item spikes in a different direction, when averaged together they create a flat profile. Meanwhile, reasoning abilities that many different inputs rely on maintain consistent moderate curves across the landscape, like rolling hills that remain roughly the same shape regardless of the direction from which you approach them.
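The averaging effect is easier to see with numbers. The sketch below is a toy model, not K-FAC and not the researchers’ code: it assumes 50 hypothetical training examples, each with a sharp curvature spike (50.0) along its own private direction and a moderate curvature (5.0) along one direction shared by all of them. All of those values are invented for illustration.

```python
# Toy model: per-example curvature matrices, then their average.
# Direction 0 is "shared" (used by every example); directions 1..N are
# "memorized" (each used by exactly one example). All numbers are made up.

N_ITEMS = 50
DIM = N_ITEMS + 1
SHARP, MODERATE = 50.0, 5.0

def unit(i, dim):
    # Unit vector pointing along axis i.
    return [1.0 if k == i else 0.0 for k in range(dim)]

def outer(v):
    # Outer product v v^T: curvature concentrated along direction v.
    return [[a * b for b in v] for a in v]

def curvature_along(H, v):
    # Curvature of matrix H in direction v, computed as v^T H v.
    n = len(v)
    return sum(v[i] * H[i][j] * v[j] for i in range(n) for j in range(n))

shared_dir = unit(0, DIM)
shared_part = outer(shared_dir)

per_example = []
for item in range(1, DIM):
    private_part = outer(unit(item, DIM))
    per_example.append([
        [SHARP * private_part[r][c] + MODERATE * shared_part[r][c]
         for c in range(DIM)]
        for r in range(DIM)
    ])

# Average curvature over all examples.
avg = [[sum(H[r][c] for H in per_example) / N_ITEMS for c in range(DIM)]
       for r in range(DIM)]

single_spike = curvature_along(per_example[0], unit(1, DIM))
avg_memorized = curvature_along(avg, unit(1, DIM))
avg_shared = curvature_along(avg, shared_dir)
print(single_spike, avg_memorized, avg_shared)
```

In this toy setup, each private spike is diluted by the 49 examples that ignore its direction, while the shared direction keeps its moderate curvature after averaging because every example contributes to it.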